They say there is no better place to test things than out in the real world - so that is exactly what we did! On Sunday the whole team went out on the street in Tel Aviv and explained the project to people passing by. The idea behind this street demo day was to get feedback from people who are neither IDC students nor friends of ours, and who have no previous knowledge of our project. This was also a great opportunity for us to use the application for an extended period of time out in the world, where it is supposed to be used.

We put together a small stand in the street and had several phones ready so we could get people to play with them. The plan was for some of us to split off and walk down the street to catch people as they walked by, while a few stayed at the stand, which drew people in on its own. This way people were always playing on one of the four phones we had, and there was always something to guess.

Throughout the day we had a lot of people stop by and play. Many were able to guess sounds that people had recorded before them, and some very creative recordings were made - including one beatboxer recording himself!

Overall, people expressed interest in the game itself. However, we realized that the current pace of the game might be a bit slow, since it takes a while to reach the recording stage. Also, when we explained the grand goal behind the game, quite a few people got excited once the link was made to sound-recognizing applications available today, like song-recognizing services.

Considering the rapid progress made on Friday, all of Saturday was spent trying to implement the advanced graphics features needed for the application - interactive panning and zooming.

From the beginning it was obvious that this should be a "service" provided by the infrastructure, done correctly and robustly once so that it can later be used through simple functions such as centerScreenOnTarget(x) or even fillScreenWithComponent(x). That was the basic idea. However, before automatic zooming and panning could be implemented, manual controls had to work first, in a way that lets the components still believe they have not moved and lets us figure out where the mouse is in relation to the objects, accounting for any zooming/panning currently in effect.

It turned out this was no simple task. The basic interactive zooming and panning was done quite quickly, but an entire hour was lost tracking down bugs that caused the image to flicker and jump around. With this out of the way, it was time to figure out the mouse's "world coordinates" from its position in the window and the zoom/pan properties of the camera. Once again a few hours were lost, this time to reviewing Computer Graphics formulas for camera-world view transformations, implementing them, and then discovering a mistake both in our math and in the order in which the transformations were applied to the mouse coordinates to work back to world coordinates.
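For readers curious about the math, the screen-to-world mapping can be sketched roughly as follows. This is an illustration in plain Java, not the project's actual code: the class `Camera2D` and all of its fields and methods are hypothetical names, and it assumes the camera applies a uniform scale followed by a translation (what calling `translate(panX, panY)` and then `scale(zoom)` would do in a Processing sketch). The key point is that the inverse must undo those steps in the opposite order.

```java
/** Hypothetical 2D camera: world -> screen is "scale by zoom, then add pan". */
final class Camera2D {
    double zoom = 1.0; // uniform scale factor (1.0 = no zoom)
    double panX = 0.0; // screen-space offset in pixels
    double panY = 0.0;

    // Forward transform: scale first, then translate.
    double worldToScreenX(double wx) { return wx * zoom + panX; }
    double worldToScreenY(double wy) { return wy * zoom + panY; }

    // Inverse transform: undo the translation first, then undo the scale.
    double screenToWorldX(double sx) { return (sx - panX) / zoom; }
    double screenToWorldY(double sy) { return (sy - panY) / zoom; }

    /** Zooms by the given factor while keeping the world point under (sx, sy) fixed on screen. */
    void zoomAt(double sx, double sy, double factor) {
        double wx = screenToWorldX(sx);
        double wy = screenToWorldY(sy);
        zoom *= factor;
        // Re-solve "wx * zoom + panX == sx" for the new zoom value.
        panX = sx - wx * zoom;
        panY = sy - wy * zoom;
    }

    /** Pans so that the world point (wx, wy) lands in the middle of a width x height window. */
    void centerOn(double wx, double wy, double width, double height) {
        panX = width / 2.0 - wx * zoom;
        panY = height / 2.0 - wy * zoom;
    }
}
```

With something like this in place, a component can keep working in its own world coordinates and hit-test against `screenToWorldX(mouseX)` and `screenToWorldY(mouseY)`, regardless of the current zoom and pan.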

With both zoom and pan working, the application now gives a very good idea of what the final product will be like. Only the problem of adding information text in the circles on selection remained, and this was done on Sunday.

Some photos of the final product for this weekend of coding:

It's been a crazy 24 hours, and about 19 of those were spent designing and coding. Be warned - this is a long post, containing details of the process, the new framework implemented, and all the new features added to the visualization application.

Summary:

From the tasks set out in the beginning, the design and implementation of most of the infrastructure is complete, and so is the refactoring. Effects and animations on the circles such as mouse-over effects and pulses on selection are working, as well as sound!

Features that are missing are advanced graphics options such as zooming/panning of the visualization and text displaying inside circles on click.

Now the details:

The infrastructure is designed as follows: a new class forms the basis of the application and provides easy handling of object-oriented components in a Processing applet. Several classes and interfaces were created to enable this. The infrastructure also wraps the wonderful Minim package for Processing; the wrapper manages loading and playing sounds, frees resources once sounds have finished playing, and offers a few other features.

Once all of this was done, it was time to refactor the previous code to the new infrastructure and change it to fit the new design. Here are some photos of the design:

The pulse effect was the first new feature added after the refactoring, to get used to the new infrastructure and to get our hands "dirty" with some animations and not just static objects - and it was worth it! The new framework made it very easy to think only about the ripple and how it should behave, without worrying about where it is and how to place it there.

Next, sounds were added. This took a bit of playing around with Minim, and some back-and-forth between changing the infrastructure to provide the services we wanted and returning to work on the sounds of the actual application.

Last but not least - the application features a mode that automatically kicks in if users don't move the mouse for more than a preset amount of time. Once this "demo mode" is on, it cycles through the sounds, selecting one at a time and activating it.
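The idle detection behind such a demo mode can be sketched along these lines. This is only an illustration under assumptions, not our actual implementation: the class `DemoMode`, its constructor parameters and the fixed time per sound are all hypothetical, and the timestamps are passed in explicitly (in a Processing sketch they would presumably come from `millis()`).

```java
/** Hypothetical idle-triggered demo mode that cycles through a list of sounds. */
final class DemoMode {
    private final long idleTimeoutMs; // how long the mouse must rest before demo mode starts
    private final long stepMs;        // how long each sound stays selected (an assumption)
    private final int soundCount;     // number of sounds to cycle through
    private long lastInputMs;         // timestamp of the most recent mouse movement

    DemoMode(long idleTimeoutMs, long stepMs, int soundCount, long nowMs) {
        this.idleTimeoutMs = idleTimeoutMs;
        this.stepMs = stepMs;
        this.soundCount = soundCount;
        this.lastInputMs = nowMs;
    }

    /** Call from the mouse-moved handler; any movement leaves demo mode. */
    void onMouseMoved(long nowMs) {
        lastInputMs = nowMs;
    }

    /** True once the mouse has been idle for longer than the timeout. */
    boolean isActive(long nowMs) {
        return nowMs - lastInputMs > idleTimeoutMs;
    }

    /** Index of the sound to select right now, or -1 when demo mode is off. */
    int currentIndex(long nowMs) {
        if (!isActive(nowMs) || soundCount == 0) {
            return -1;
        }
        long sinceDemoStart = nowMs - lastInputMs - idleTimeoutMs;
        return (int) ((sinceDemoStart / stepMs) % soundCount);
    }
}
```

The draw loop would then ask for `currentIndex(...)` every frame and select and activate a sound whenever the index changes.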

Another weekend coming up... it's time for more coding! Except this time the visualization aspect of the project is the target.

Goals for this weekend:

Designing and implementing the infrastructure for the new application. It should provide some convenient services and allow simpler handling of object-oriented "components" inside a Processing app, without them having to register for events on their own. It should also do some of the hard work for them, for example timekeeping between consecutive calls to draw().

This is all based on our first experience last weekend with Processing, and in light of the much more complex aspects of the new visualization - playing sounds, animations on each circle, panning/zooming etc.
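To make the goal a bit more concrete, here is a rough sketch of the kind of infrastructure we have in mind. Everything in it is hypothetical (the `Component` interface, the `ComponentHost` class and the example `Pulse` component are names made up for illustration), and it is plain Java rather than a real Processing sketch: the host would be called once from draw() with the current time, and it takes care of measuring the elapsed time and forwarding events.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical contract for an object-oriented component managed by the host. */
interface Component {
    void update(double dtSeconds);       // advance animations by the elapsed time
    void mouseMoved(double x, double y); // receive events without registering for them
}

/** Hypothetical host: owns the components, keeps time between frames, forwards events. */
final class ComponentHost {
    private final List<Component> components = new ArrayList<>();
    private long lastFrameMs = -1; // -1 means no frame has been processed yet

    void add(Component c) {
        components.add(c);
    }

    /** Call once per draw(); returns the time since the previous frame in seconds. */
    double frame(long nowMs) {
        double dt = lastFrameMs < 0 ? 0.0 : (nowMs - lastFrameMs) / 1000.0;
        lastFrameMs = nowMs;
        for (Component c : components) {
            c.update(dt);
        }
        return dt;
    }

    /** Call from the sketch's mouse handler; components never touch the event system. */
    void dispatchMouseMoved(double x, double y) {
        for (Component c : components) {
            c.mouseMoved(x, y);
        }
    }
}

/** Example component: a ring that grows at a fixed speed, independent of the frame rate. */
final class Pulse implements Component {
    final double speed; // growth in pixels per second
    double radius = 0.0;

    Pulse(double speed) {
        this.speed = speed;
    }

    @Override
    public void update(double dtSeconds) {
        radius += speed * dtSeconds;
    }

    @Override
    public void mouseMoved(double x, double y) {
        // This example ignores the mouse.
    }
}
```

Because each component only sees the elapsed time, an animation like the pulse should behave the same whether the sketch runs at 30 or 60 frames per second.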

Stay tuned for updates during the weekend!

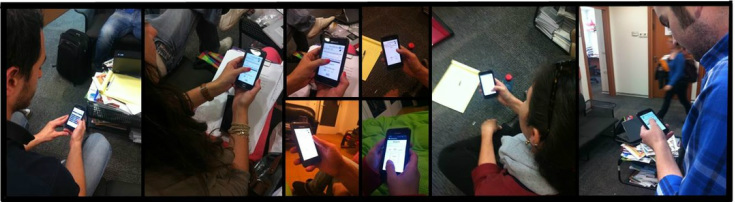

We now have, for the first time, a playable game with all parts implemented - it's time to get out and test it with users again! For this purpose two of us, Lihi (Psychology) and Gadi (Computer Science), got several phones ready, took out pen and paper, and asked people to play!

We went around the IDC campus and asked students to play a round of the Soundscape game - record one sound and guess one sound.

In total we tested the game with 9 users. Right after the first round we spotted several bugs, which were quickly relayed back to our ever-supportive group, and a new version with the fixes was rolled out within minutes, before the second willing participant had even been found.

Throughout the day many improvements were made to the application and then put straight out in the field to be tested - from small bugs such as buttons not working / working when they shouldn't to changes in the UI when many users made mistakes and missed key elements of the application.

Users were also asked which purpose would motivate them to use the game more - the sound collection database or the positive psychology. 7 of the 9 answered that the database would motivate them more, and the other 2 said they were neutral but slightly preferred the sound database.

Soundscape is like Draw Something, but with sounds: it's a social-mobile game in which you record a sound from a selected category and others try to guess what sound you recorded.

To get started with Soundscape, select an opponent to play with and a sound to record from a set of options. If you are standing next to one of the sounds, you can go ahead and record it to send your sound challenge to your opponent. Choose the "Free recording" option and you can record any sound available to you.

You and your opponent both earn points when one of you records a sound and the other guesses it correctly. Each sound "level" comes with a score; the more a sound is worth, the harder it may be to record and for the other person to guess correctly.

When you receive a sound, you can listen to it as many times as you'd like. You will have 3 attempts to guess what sound your opponent recorded by selecting letters from a virtual keyboard to fill in the blanks for the sound.

You can also use bombs to eliminate some of the available letters when you're guessing to make things easier.

Over the past week or so we have started putting together the data-visualization aspect of the project. This is supposed to provide users with an interactive experience and allow them to explore and "see" the data collected from our project.

Following the design specification and with code contributions from both the Computer Science students and the Communications students we have put together the first version of our data visualization.

Below are a few shots of the visualization. The live demo allows users to click on each "bubble" to find out more about it, such as the sound it represents, the total number of recordings for that sound, and the average user "pleasantness" emotion rating.

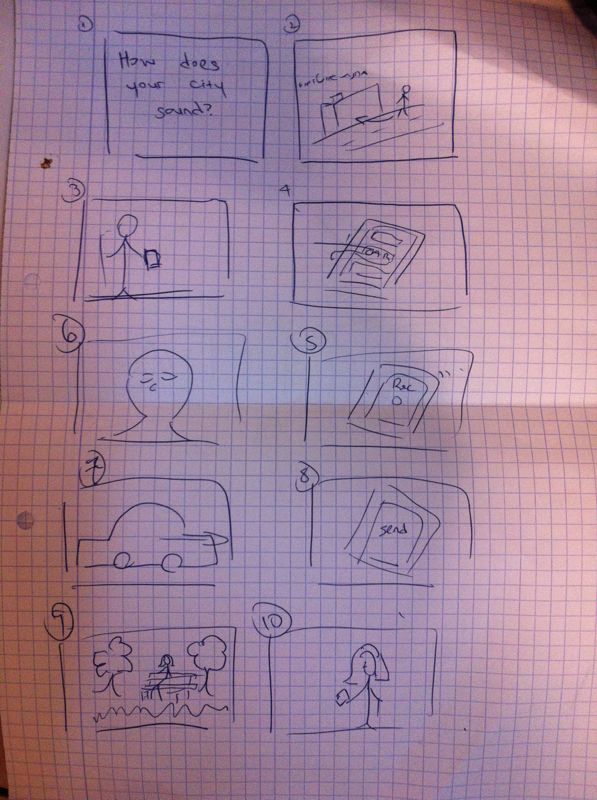

Before shooting the video for our project, we made a storyboard and edited it into an animatic.

This helped us to test whether the images work effectively together.

Regarding the data visualization – we want something clear enough that the user can “visualize the city’s soundscape”. This means that every detail in the design – color, shape, size – must convey meaning about the data, yet remain intuitive to interpret. Moreover, for people to be engaged by the data visualization, the user experience must be warm and invite users to explore the information it contains. To meet these complex criteria, we brainstormed and put down several preliminary design ideas on the way to a final decision.

After we developed the initial version of the app, we tested it on 10 anonymous users in order to obtain initial feedback on its use. We explained the basic idea of the app to the users and asked them to try it on their own while describing to us what they were doing. Each user was also asked to point out anything that got in their way and to suggest what they would do differently.

It was very interesting to see the way the users interact with the game and to hear their feedback.
